By the time a talent acquisition leader is comparing assessment providers, she’s already heard three pitches that sound identical. They all say 'validated.' They all say 'culture fit.' Everyone sports case studies with impressive numbers. The presentations blur together.

The question at this point isn’t whether to use assessments. The question is how to tell the difference between a real partner and a polished presentation.

These eight questions help. They’re the questions we’d want asked of us – and of anyone else in the room. Because the people this decision ultimately affects aren’t sitting in the demo. They’re on the floor, behind the counter, at the bedside, or intensely focused on a computer, waiting to work alongside whoever gets hired next. The hiring assessment a provider builds will shape who that person turns out to be.

Psychometric Assessments require eight "Yes!" answers to these questions

From our five decades working with organizations across industries, we have developed a suite of assessments that predict performance and results – helping your teams hire to consistent standards and strengthen your culture. Delivering sound and full answers to each of the questions that follow is core to how we support the success of the organizations we serve.

1. Are your assessments validated? What is your approach?

Validation is the word every provider uses. It’s also the one most likely to mean different things in different rooms.

What it should mean: the assessment predicts actual job performance. Not that the test items correlate with each other internally, but that people who score well go on to do the work well – stay longer, perform stronger, protect the customer experience in the moments that matter. A validated assessment is the difference between a hire who reads a frustrated guest accurately and one who makes it worse.

A strong answer includes specifics. What performance data does the provider validate against? Tenure. Supervisor ratings. Leading indicators like attendance and training completion. Lagging indicators like retention, sales, and satisfaction scores. If the answer stays at “our tests are statistically reliable,” keep asking.

We validate against real KPIs – not once, but continuously. We actively seek performance data from every client partnership because it lets us set custom benchmarks. At one of our restaurant clients, candidates scoring above average on our assessment were 91% more likely to exceed performance expectations in their first six months. That number didn’t come from employees in an unrelated job or industry. It came from the floor.

Here are some meaningful sources of data for validating an assessment:

- Tenure, or time employed: are assessments linked to longer tenure?

- Supervisor ratings of performance: ideally from a well-designed performance review built around your competencies. In addition, we frequently build these surveys to deploy to supervisors, asking core questions about behavior and results that should be influenced by assessment performance.

- Leading indicators: behaviors and records that show us moving in the right direction toward the lagging indicators that matter. This could include time and attendance data, time in training, or records of other core behaviors that drive results.

- Lagging indicators: sales, retention/turnover rates, total controllable income, and other savings.
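For readers who want to see what "validating against performance data" can look like in practice, here is a minimal sketch in Python. It assumes the simplest possible check, a Pearson correlation between assessment scores and one outcome at a time. The function name `pearson_r` and every number in it are made up for illustration; they are not client data, and a real validation study involves far more than this (sample size, range restriction, job analysis, and so on).

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a variable has no variance")
    return cov / sqrt(var_x * var_y)


# Hypothetical data for six hires (illustration only, not real results).
assessment_scores = [52, 61, 70, 74, 80, 88]
supervisor_ratings = [2.5, 3.0, 3.0, 3.5, 4.0, 4.5]  # e.g. a 1-5 rating scale
tenure_months = [3, 8, 7, 12, 14, 18]

validity_vs_ratings = pearson_r(assessment_scores, supervisor_ratings)
validity_vs_tenure = pearson_r(assessment_scores, tenure_months)

print(f"score vs. supervisor rating: r = {validity_vs_ratings:.2f}")
print(f"score vs. tenure (months):   r = {validity_vs_tenure:.2f}")
```

A positive correlation here means higher scorers tended to earn higher ratings or stay longer. The point of the sketch is only that validation connects scores to outcomes like the ones listed above, rather than test items to each other.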

Our assessments are validated for the competencies that drive performance. Competencies are the clusters of knowledge, skill, ability, personality and values, and other characteristics that drive results. Ultimately, the assessments you use should be shown to demonstrate results in roles similar to your own and with the competencies that define success.

Some of the most critical skills and competencies, both from our own work and the workforce at large, include:

- Critical Thinking

- Collaboration

- Leadership

- Adaptability

- Creativity and Curiosity

- Customer Service

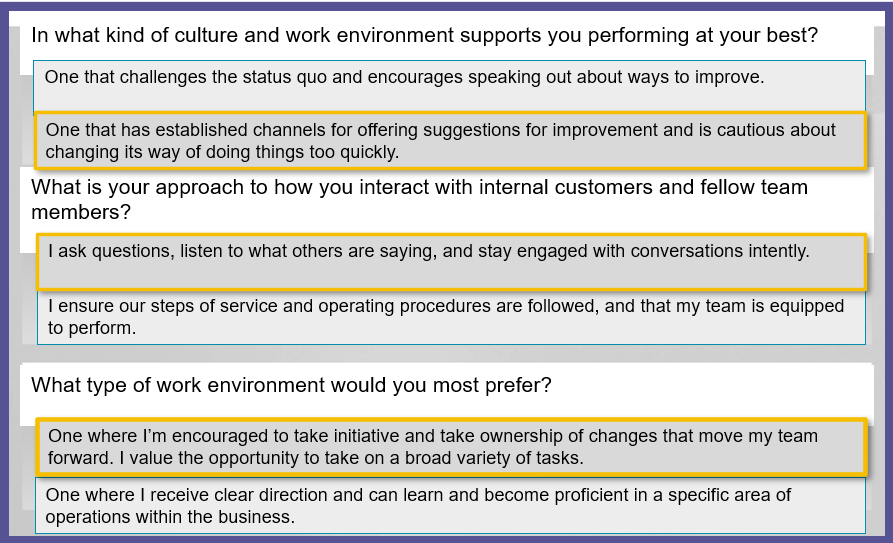

2. How will the assessment be built? How will it be customized to our specific needs for performance and culture?

A generic assessment screens for generic traits. But the behaviors that define a great customer interaction at a quick-service restaurant are different from those at a hospital or a luxury hotel. The specificity matters – not as a feature, but as the mechanism that connects the assessment to what actually happens in your operation.

Listen for whether the provider starts with your world or with their catalog. Does the conversation begin with your performance dimensions, your culture, your definition of success? Or does it begin with a product demo?

We start with your team. We consult on the competencies that drive performance and culture in your specific context, then customize assessments to measure the potential to perform, stay, and live your values. Situational items reference your operations – the actual scenarios your people face. The validated skeleton stays intact. The experience speaks your brand. We can even build a realistic job preview that educates candidates about what the role genuinely requires, so the people who move forward are choosing your organization with clear eyes.

Additionally, our assessment results can be customized with competencies and performance-based narratives that speak your language, helping to secure buy-in and support for the solution, while ensuring that you are hiring to meaningful and consistent standards.

3. Is the candidate experience engaging? What is your candidate completion rate?

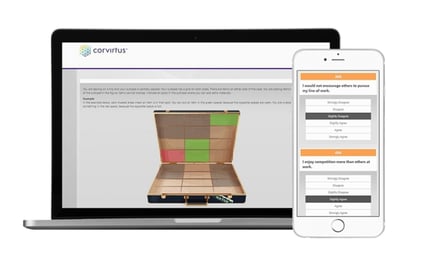

The assessment is often a candidate’s deepest interaction with an organization before day one. Deeper than the job posting. Deeper than the application. For twenty or thirty minutes, she’s being asked to demonstrate who she is and how she thinks.

How an organization treats a person during that window signals something. A thoughtful, well-designed experience says: we take this seriously, and we take you seriously. A clunky one says the opposite. And that signal doesn’t expire on the start date. The person who eventually gets hired carries that first impression into how she approaches the work – and the people she serves.

Ask about completion rates. Below 75 percent is worth investigating. Ask whether the provider has data on how candidates perceive the experience – not just whether they finish, but whether they felt it was fair. Ask about length. An assessment that’s too long frustrates candidates. One that’s too short doesn’t give them enough room to demonstrate who they are, and they notice.

Our completion rates can exceed 90 percent. Our assessments use varied question types – personality, situational, cognitive – at lengths calibrated to the role. Candidates see a progress bar throughout. They leave believing they were evaluated fairly and received the opportunity to perform. At Krispy Kreme, that approach handles 80,000 annual applications while maintaining completion rates above 90%.

4. Is the candidate experience mobile responsive?

While we’re on the topic of candidate experience, all of our assessments are mobile responsive and optimized. We know that more than 50% of candidates, especially those applying for entry-level positions, begin the process on a mobile device. Candidates can complete the entire assessment on a mobile device, tablet, or computer. They can also start on one device and finish on another with the link they've received. And, because our assessments are mobile responsive, the content (i.e., questions and response options) will automatically adjust to fit the size of the screen that the candidate is using, resulting in a candidate-friendly interface.

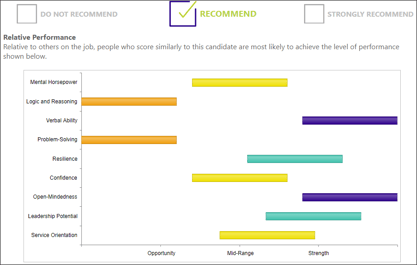

5. Are assessment reports tailored to the needs of our recruiters and hiring managers?

Our reports are built for operators, recruiters, and managers. Unlike many assessments on the market, ours require no extensive training or certification to interpret. Results are designed to provide all of the information you need, when you need it. You're immediately provided with an overall recommendation for the candidate, as well as how the candidate performed on each competency measured by the assessment. For each competency, users can also view information that describes the candidate’s expected behavior and potential to perform, if hired. Together, the overall recommendation and the competency-based strengths and opportunities are designed to streamline and inform your decision-making process. Beyond hiring, the assessment result tells the user how to support the candidate’s success if they are hired. Our assessment results are web-based and scored immediately after a candidate submits their assessment. Results can easily be printed out or shared with others electronically.

Check out this quick video on our assessment results.

6. Are your hiring assessments legally defensible?

Ask about track record. Ask about adverse impact monitoring, and alignment with the Uniform Guidelines on Employee Selection Procedures. Ask whether the provider collects voluntary demographic data to monitor for disparate impact – proactively, not reactively.

In four decades, our assessments have never been called into legal question. That’s not an accident. It’s the result of building every assessment with compliance embedded from the first item – not bolted on after the fact. Our assessments meet EEOC guidelines and provide an inclusive opportunity for neurodiverse candidates. We can collect voluntary protected-class information – separate from the assessment – so we can monitor continuously. Not because we’re required to. Because it’s how a system should be built.

Combined, these features, and our intense validation, support legal defensibility and create an experience that candidates view as engaging, fair, and something that enhanced their ability to perform. This is supported by our high completion rates.
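One common starting point for adverse impact monitoring is the "four-fifths" rule of thumb described in the Uniform Guidelines: compare each group's pass rate to the highest group's pass rate, and look more closely at any ratio below 0.8. The sketch below shows that arithmetic in Python. The group labels, counts, and function names are hypothetical, and a ratio below the threshold is a prompt for further review, not a legal conclusion.

```python
FOUR_FIFTHS = 0.8  # rule-of-thumb threshold from the Uniform Guidelines


def selection_rates(counts):
    """counts maps group -> (passed, applied); returns group -> pass rate."""
    return {
        group: passed / applied
        for group, (passed, applied) in counts.items()
        if applied > 0
    }


def adverse_impact_ratios(counts):
    """Each group's pass rate divided by the highest group's pass rate."""
    rates = selection_rates(counts)
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no group has any passing candidates")
    return {group: rate / top_rate for group, rate in rates.items()}


def flagged_groups(counts, threshold=FOUR_FIFTHS):
    """Groups whose impact ratio falls below the threshold."""
    ratios = adverse_impact_ratios(counts)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical counts (passed, applied), for illustration only.
counts = {
    "group_a": (60, 100),
    "group_b": (45, 100),
    "group_c": (54, 100),
}

ratios = adverse_impact_ratios(counts)
for group in sorted(ratios):
    print(f"{group}: impact ratio {ratios[group]:.2f}")
print("below four-fifths:", flagged_groups(counts))
```

In practice the demographic data would come from the voluntary, separately collected information described above, and the check would be rerun as new candidates complete the assessment.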

7. Do you partner with us to document return-on-investment?

Turnover is expensive. That’s not new information. What’s less obvious is what turnover actually costs the customer. Every departure puts a less-experienced person in front of the guest, the patient, the client. Institutional knowledge walks out. The replacement is learning on the job while the customer absorbs the gap. The real return on a validated assessment isn’t just the money saved on rehiring. It’s the consistency of the experience your customers receive, month after month, because the people delivering it were selected for the right reasons.

Ask whether the provider measures ROI against your KPIs or just their own benchmarks. Ask whether they’ll link assessment results to tenure, customer satisfaction, and performance outcomes specific to your organization – not industry averages.

We link assessment performance to your data. Turnover, satisfaction, controllable income, performance measures that matter to your culture. We actively seek this work because it lets us show – in dollars and in outcomes – what the assessment is doing. One client found that employees who failed the assessment but were hired anyway were twice as likely to be terminated. At PDQ, managers who passed our assessments were ten times better at problem-solving and five times more likely to build high-performing teams. Those numbers came from the partnership – from tracking what happened after the hire, not just during it.

All of our assessments are validated, but what about demonstrating results for your unique enterprise in dollars? As part of our partnership with you, we measure the return-on-investment of our assessments by linking assessment results to your own key performance indicators, like turnover, customer/stakeholder satisfaction and outcomes, and performance measures valuable to your culture. We relish the opportunity to show our clients the impact our assessments have when it comes to hiring and retaining top performers, and on their bottom line.

To this point, one of our clients with locations across the country found that employees who failed the assessment but were hired were twice as likely to be terminated.
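A comparison like "twice as likely to be terminated" comes from dividing one group's termination rate by another's. Here is a minimal Python sketch of that arithmetic. The counts are invented for illustration and are not the client's figures, and the function names are our own.

```python
def termination_rate(terminated, hired):
    """Share of hires in a group who were later terminated."""
    if hired <= 0:
        raise ValueError("hired must be positive")
    return terminated / hired


def relative_risk(rate_a, rate_b):
    """How many times more likely an outcome is in group A than in group B."""
    if rate_b == 0:
        raise ValueError("comparison group has a zero rate")
    return rate_a / rate_b


# Hypothetical counts, for illustration only.
failed_but_hired = {"hired": 50, "terminated": 20}
passed_and_hired = {"hired": 200, "terminated": 40}

rate_failed = termination_rate(**failed_but_hired)
rate_passed = termination_rate(**passed_and_hired)
risk = relative_risk(rate_failed, rate_passed)

print(f"failed-but-hired termination rate: {rate_failed:.0%}")
print(f"passed-and-hired termination rate: {rate_passed:.0%}")
print(f"relative risk: {risk:.1f}x")
```

The same pattern applies to any KPI you track by assessment result: compute the rate for each group from your own records, then compare.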

After just one year of implementation, Corvirtus assessments have helped companies achieve the following:

8. Will we have dedicated members of your team to provide support?

When something breaks at 7 AM on a Monday – a candidate locked out, a manager who can’t pull a report, a new location that needs to go live – the downstream consequences are immediate. A candidate walks away. A manager makes a gut-feel hire. A customer gets served by someone who shouldn’t have been in the role.

Ask whether you’ll have a dedicated contact or a ticket queue. Ask whether you’ll have access to practitioners and psychologists, not just account managers. Ask what happens when a candidate – not just the buyer – needs help.

Ticket systems are not our thing. Every client has a dedicated person they can reach at any time, plus access to I-O psychologists and practitioners. Phone or email. Addressed immediately. We extend that to your employees and candidates, not just to you – because the person who needs help at 7 AM on a Monday is often the person standing between your customer and a bad experience.

The talent acquisition leader who started with three identical-sounding pitches now has a framework for telling them apart. Eight questions. Eight places where the answer reveals whether a provider understands what’s actually at stake.

Because the person this decision affects most will never see an assessment score, read an ROI report, or sit in a vendor demo. She’ll walk in the door, interact with the person who got hired, and form an opinion about the organization in about ninety seconds. The assessment that selected that employee is either working for her – or it isn’t.

Take the next step: try Corvirtus assessments free for your next hire.

A Helpful Glossary of Hiring Assessment Terminology

ADA compliance – Adherence to the Americans with Disabilities Act, ensuring assessment design accommodates candidates with disabilities including visual impairments, color-blindness, and neurodiverse conditions without compromising measurement validity.

Adverse impact monitoring – The practice of collecting and analyzing demographic data to proactively identify whether an assessment systematically disadvantages candidates from protected classes, separate from the hiring decision itself.

Competency-level detail – A breakdown in assessment reporting that shows how a candidate performed on each specific skill, behavior, or trait being measured, rather than only an overall score.

Completion rates – The percentage of candidates who begin and finish an assessment; rates below 85% typically indicate problems with candidate experience or assessment design.

Culture-fit – The alignment between a candidate’s values, behaviors, and work style and the organization’s stated values and operational norms.

Custom benchmarks – Performance thresholds and scoring standards calibrated to an individual organization’s actual operational data and performance outcomes, rather than industry-wide averages.

Disparate impact – The unintended consequence of a neutral hiring practice that systematically excludes or disadvantages members of a protected class at significantly higher rates than other groups.

Embedded compliance – The integration of legal and regulatory requirements into assessment design from inception, rather than adding compliance features after the assessment is built.

I-O psychologists – Industrial-organizational psychologists who specialize in applying psychological science to workplace problems, including assessment design and validation.

Institutional knowledge – The accumulated understanding, procedures, and problem-solving approaches that experienced employees carry and that the organization typically loses when they depart.

Intrinsic motivation – Internal drive and engagement that comes from finding the work meaningful, rather than external rewards; signaled by how a candidate perceives and responds to the assessment experience.

KPIs – Key Performance Indicators; measurable values that show how effectively an organization is achieving its objectives, such as retention, sales, or customer satisfaction.

Lagging indicators – Performance metrics that measure outcomes after the fact, such as retention rates, sales figures, or customer satisfaction scores.

Leading indicators – Early-stage performance metrics that predict future outcomes, such as attendance, training completion, or time-to-productivity.

Neurodiverse candidates – Job applicants with neurological differences such as autism, ADHD, dyslexia, or other conditions that affect how they process information and interact with assessments.

Realistic job preview – An assessment component that educates candidates about the actual demands, scenarios, and environment of the role before they are hired, enabling informed decision-making.

Situational items – Assessment questions that present realistic workplace scenarios and ask candidates how they would respond, designed to measure decision-making and behavioral tendencies in context.

Supervisor ratings – Performance evaluations provided by direct managers, used as one form of validation data to confirm that candidates scoring well on assessments actually perform well on the job.

Tenure – Length of time an employee remains with an organization; used as a validation metric because retention indicates both job fit and assessment accuracy.

Uniform Guidelines on Employee Selection Procedures – Federal guidelines that establish standards for the validity and fairness of employee selection tools, ensuring they measure actual job-related competencies without discriminatory impact.

Validated assessment – An assessment proven through empirical research to predict actual job performance and outcomes, demonstrated by showing that candidates who score well go on to perform strongly, stay longer, and protect customer experience.

Voluntary demographic data – Self-reported information about protected characteristics (race, ethnicity, gender, disability status, etc.) that candidates provide separately from the assessment itself to enable bias monitoring.